What is a User Click Aggregator? (And Why It Matters)

Every time you click on a product, an article, or a tweet, you're leaving behind a signal.

A User Click Aggregator is a system that captures those signals (clicks) in real time and helps organisations extract useful insights from them—especially over short time windows like the past 5 minutes, 1 hour, or 24 hours.

Let’s break that down.

Real-World Examples:

User Click Aggregators power many features we use daily. For instance:

📈 Bloomberg Terminal

Traders and analysts rely on the "most read financial news in the last 1 hour" to stay ahead of the market.

🛒 Amazon

Their recommendation engine might highlight “Trending Products”, based on what users are clicking most right now.

🐦 Twitter/X

Popular tweets or trending hashtags are often determined by how many users are engaging with them in real time.

So yes, this is a practical system design problem. And it's everywhere.

Functional Requirements:

Here’s what such a system must support:

Users can click on products, tweets, articles, etc.

Internal teams (like Amazon's product team) can query the top K clicked items in the last N hours.
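To pin down what "top K clicked items in the last N hours" means, here is a tiny in-memory reference version. It is only a sketch to make the requirement concrete, not how the real system computes it; the `Click` fields and the `top_k` function are names I made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Click:
    # Hypothetical field names, chosen for this example only.
    item_id: str
    user_id: str
    clicked_at: datetime


def top_k(clicks, k, n_hours, now):
    """Return the k most-clicked item ids within the last n_hours as (item_id, count) pairs."""
    cutoff = now - timedelta(hours=n_hours)
    counts = Counter(c.item_id for c in clicks if cutoff <= c.clicked_at <= now)
    return counts.most_common(k)


now = datetime(2024, 1, 1, 12, 0)
clicks = [
    Click("shoes", "u1", now - timedelta(minutes=5)),
    Click("shoes", "u2", now - timedelta(minutes=50)),
    Click("lamp", "u1", now - timedelta(minutes=30)),
    Click("book", "u3", now - timedelta(hours=3)),  # falls outside a 1-hour window
]
print(top_k(clicks, k=2, n_hours=1, now=now))  # [('shoes', 2), ('lamp', 1)]
```

Everything that follows in the design is about producing this same answer quickly and correctly when the list of clicks no longer fits in one process.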

Non-Functional Requirements:

The system must also meet serious technical demands:

High Scalability - Handle ~10,000 clicks per second.

Low Latency - Fast read queries are essential.

Data Integrity - No missed or duplicated clicks.

Fault Tolerance - Survive crashes and outages.

Realtime or Near-Realtime - Insights should reflect current activity.

Idempotency - Handle repeated clicks without duplication.

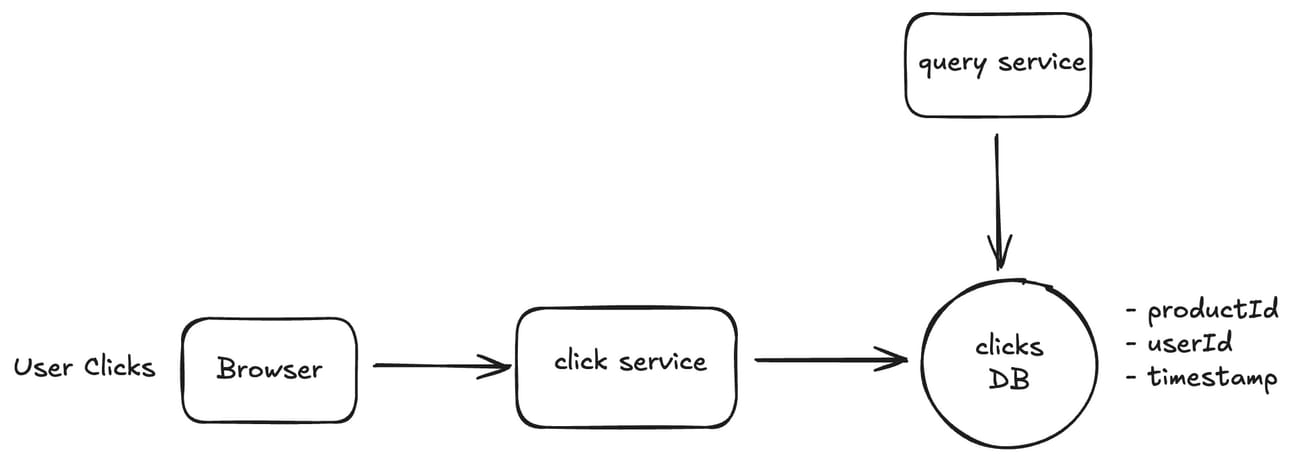

Now let’s begin with an initial, basic design for our use case:

The diagram above is very simple: a user clicks on a product in the browser, which sends an HTTP POST request to a click service, which in turn records the click in a clicks database, let’s say Postgres.

We also have a query service that runs queries against the clicks DB. A query can look like the following:

SELECT
    productId,
    COUNT(*) AS click_count
FROM
    clicks
WHERE
    timestamp >= NOW() - INTERVAL '2 hours' -- Replace 2 with N for "last N hours"
GROUP BY
    productId
ORDER BY
    click_count DESC
LIMIT 10; -- Replace 10 with K for "Top K products"

This design largely covers our core functional requirements, but it will not meet the non-functional ones. Why, you ask? It has the following problems:

The read query will be slow: at 10k clicks/s the clicks table grows very quickly, and every query has to scan and group all the rows in the time window.

A user may click multiple times in quick succession on the same product, and we would count each of those clicks, which gives wrong data.
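To put a number on the first problem, here is a quick back-of-the-envelope calculation. The only input is the 10,000 clicks per second from the requirements; it assumes that rate is sustained, which is a simplification.

```python
CLICKS_PER_SECOND = 10_000  # taken from the scalability requirement


def rows_scanned(n_hours, clicks_per_second=CLICKS_PER_SECOND):
    """Rows a 'last n_hours' query must read if every click is stored as one row."""
    return clicks_per_second * 3600 * n_hours


for hours in (1, 2, 24):
    print(f"last {hours:>2} h -> {rows_scanned(hours):,} rows")
# last  1 h -> 36,000,000 rows
# last  2 h -> 72,000,000 rows
# last 24 h -> 864,000,000 rows
```

Grouping and sorting tens or hundreds of millions of raw rows on every dashboard refresh is what makes the naive query slow.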

Let’s address these problems with an enhanced design:

Slow Query Issue:

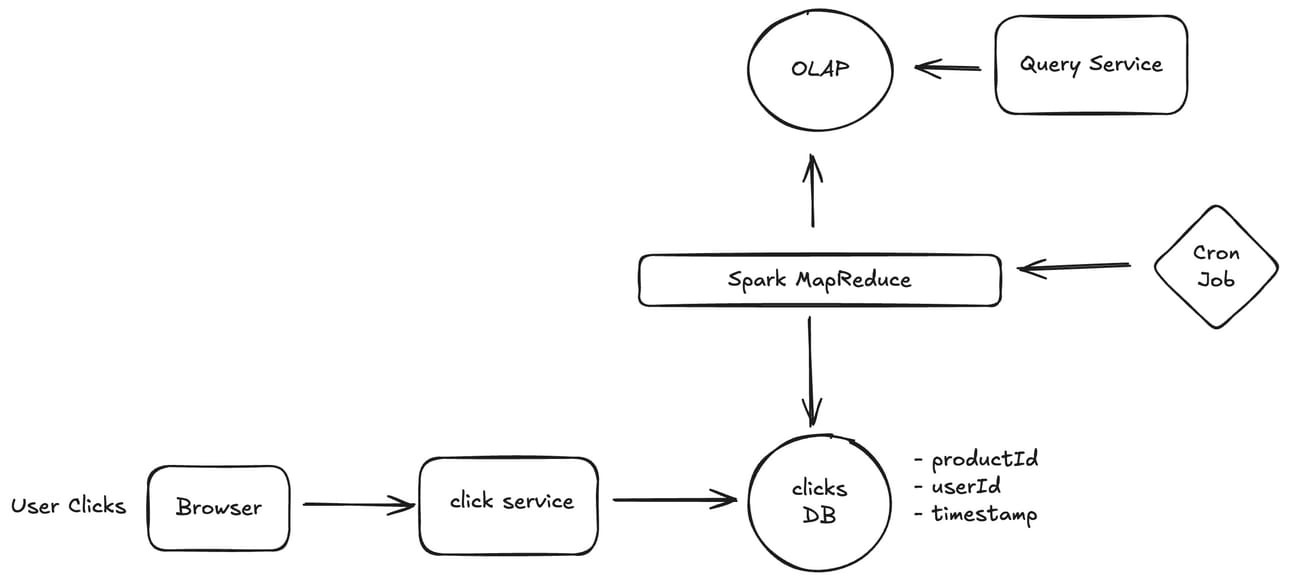

First, we should use a write-optimised database like Cassandra to store the click events instead of a typical Postgres DB. On its own, this still doesn’t address the issue of slow queries.

To address slow queries, we need a database that can handle aggregation queries much faster, such as an OLAP database (e.g. ClickHouse, Apache Druid).

The problem now boils down to how we push the data from Cassandra to our OLAP database. We will solve this by introducing a batch processing layer: a Spark job that aggregates the clicks MapReduce-style.

Here’s the updated Design:

This design is better and solves the problem of slow queries.
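Roughly, the batch job reads the raw clicks for one batch interval, counts them per product and minute, and loads the result into the OLAP store. Below is a plain-Python stand-in for that logic. It is not Spark code, and the function names, the dict standing in for the OLAP table, and the epoch-second timestamps are all assumptions made for this sketch.

```python
from collections import Counter


def aggregate_batch(raw_clicks, batch_start, batch_end):
    """Count clicks per (product_id, minute) for events with batch_start <= ts < batch_end."""
    counts = Counter()
    for product_id, ts in raw_clicks:  # ts is in epoch seconds
        if batch_start <= ts < batch_end:
            counts[(product_id, ts // 60)] += 1
    return counts


def load_into_olap(olap_table, batch_counts):
    """Add the pre-aggregated counts to the (stand-in) OLAP table."""
    for key, n in batch_counts.items():
        olap_table[key] = olap_table.get(key, 0) + n


raw_clicks = [("shoes", 10), ("shoes", 45), ("lamp", 70), ("shoes", 615)]
olap_table = {}
load_into_olap(olap_table, aggregate_batch(raw_clicks, batch_start=0, batch_end=600))
print(olap_table)  # {('shoes', 0): 2, ('lamp', 1): 1}
```

Queries now read a handful of pre-aggregated rows instead of every raw click. Note that the click at second 615 is not visible yet: it only shows up after the next batch runs.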

You may ask: why keep two separate databases for reads and writes?

The simple answer is isolation: writes and reads no longer compete for the same resources.

But this design still has a problem. We solved the problem of slow queries, but we introduced a new one: queries no longer get an up-to-date, real-time view of the data.

Why, you may ask? Since we are doing batch processing, let’s say each batch covers the last 10 minutes or so. Until the aggregated data for those 10 minutes is loaded into the OLAP database, users will get stale results from their queries.

And users may well want data that is as fresh as possible.

So to solve this problem we need another solution.

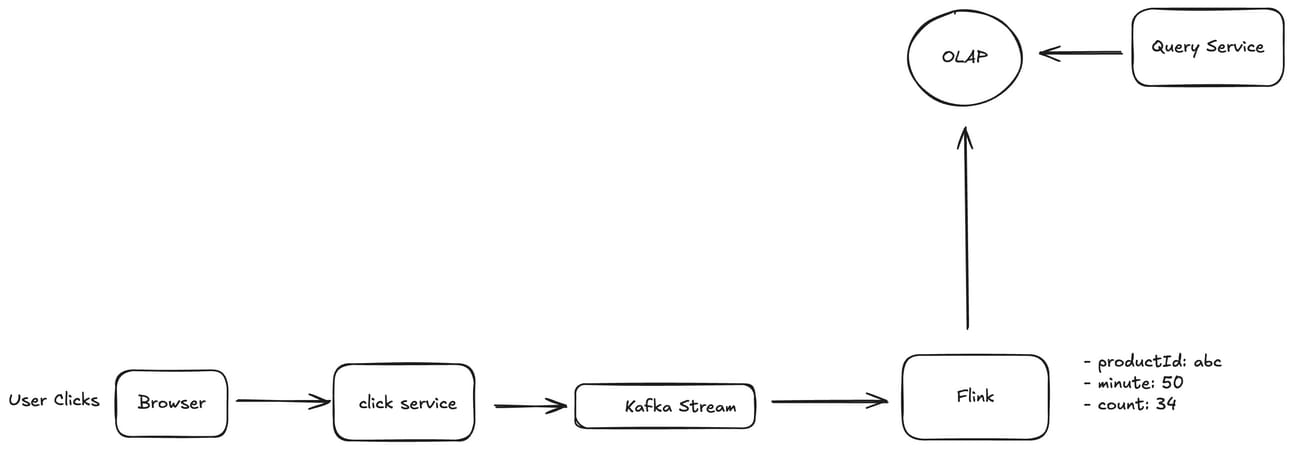

How about a stream processing solution? Let’s see:

In this design we have removed the batch processing model and introduced stream processing, which is much closer to real time. The click service publishes click events to a Kafka stream, and Flink consumes them.

We are using Flink, which aggregates clicks in memory at minute level and flushes that data to the OLAP database once the minute is over.

This gives us a much fresher view of the aggregated click data, at one-minute granularity.
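The minute-level aggregation can be pictured as a set of one-minute buckets that are filled as events arrive and flushed once their minute has passed. The sketch below shows that idea in plain Python. It does not use the Flink API; `MinuteAggregator`, `on_click`, and `on_watermark` are hypothetical names, and the `sink` callable stands in for the write to the OLAP database.

```python
from collections import Counter, defaultdict


class MinuteAggregator:
    """Keeps per-minute click counts in memory and flushes minutes that are over."""

    def __init__(self, sink):
        self.sink = sink  # callable(minute, counts); stands in for the OLAP write
        self.open_windows = defaultdict(Counter)

    def on_click(self, product_id, epoch_seconds):
        self.open_windows[epoch_seconds // 60][product_id] += 1

    def on_watermark(self, epoch_seconds):
        """Flush every window whose minute ended before the given time."""
        current_minute = epoch_seconds // 60
        for minute in sorted(m for m in self.open_windows if m < current_minute):
            self.sink(minute, dict(self.open_windows.pop(minute)))


flushed = []
agg = MinuteAggregator(sink=lambda minute, counts: flushed.append((minute, counts)))
agg.on_click("shoes", 0)
agg.on_click("shoes", 30)
agg.on_click("lamp", 59)
agg.on_click("shoes", 61)  # belongs to minute 1, which is still open
agg.on_watermark(65)
print(flushed)  # [(0, {'shoes': 2, 'lamp': 1})]
```

The data in the OLAP database is now at most about a minute behind, instead of a whole batch interval.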

Now this design solves both the problems of slow queries and real-time view of data.

Still let’s see what more we can do to improve the design.

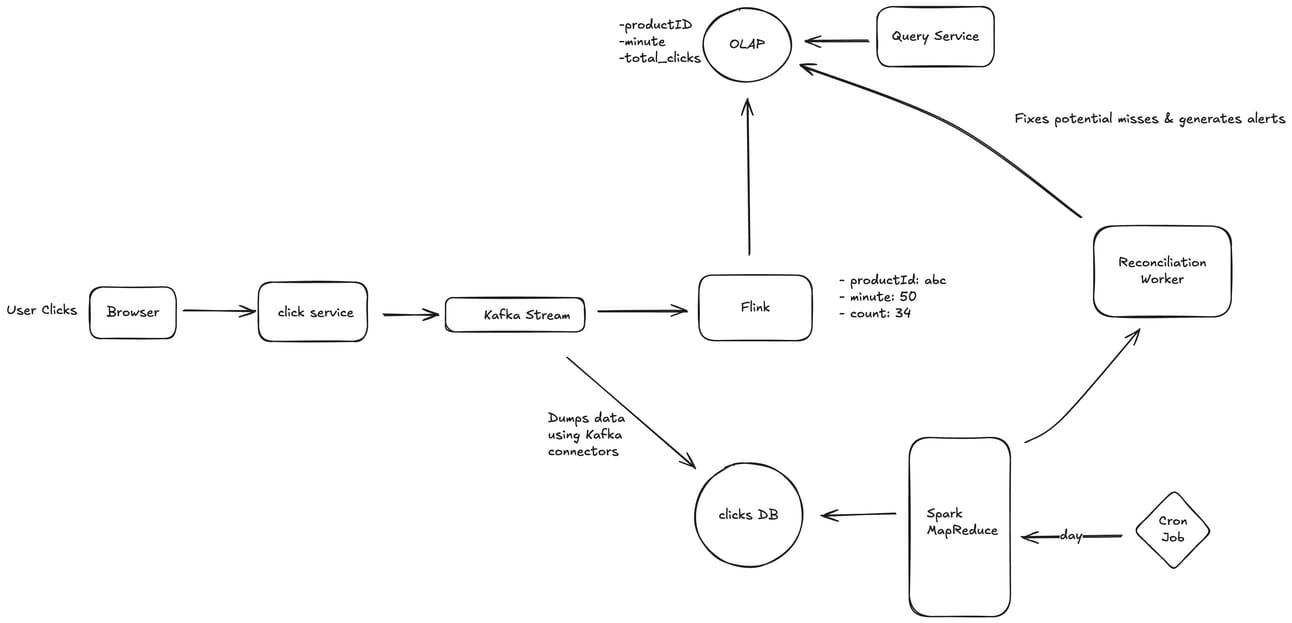

Is it possible to lose click data?

Well, let’s see. Kafka is highly durable and fault tolerant when configured correctly (for example, with replication enabled).

But Flink can miss some click events if it goes down for any reason.

To address this, we will set a retention policy in Kafka to retain click events for 7 days, so that when Flink comes back up it can resume from where it left off and pick up the events it missed.
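Retention only helps if the aggregator remembers how far it got. Here is a small simulation of that recovery, assuming the aggregator periodically saves its read position together with its counts and restores both on restart. A Python list stands in for one retained Kafka partition, and the `Aggregator` class and its methods are invented for this sketch, not taken from Flink or Kafka.

```python
class Aggregator:
    """Counts clicks from a retained log and can restart from a saved checkpoint."""

    def __init__(self, log, checkpoint=None):
        self.log = log  # list of product ids; stands in for a retained Kafka partition
        if checkpoint is None:
            self.offset, self.counts = 0, {}
        else:
            offset, counts = checkpoint
            self.offset, self.counts = offset, dict(counts)

    def process(self, max_events):
        """Consume up to max_events events starting at the current offset."""
        for product_id in self.log[self.offset:self.offset + max_events]:
            self.counts[product_id] = self.counts.get(product_id, 0) + 1
            self.offset += 1

    def checkpoint(self):
        """Snapshot the offset and the counts together so they stay consistent."""
        return (self.offset, dict(self.counts))


log = ["shoes", "lamp", "shoes", "book", "shoes"]

agg = Aggregator(log)
agg.process(2)
saved = agg.checkpoint()  # (2, {'shoes': 1, 'lamp': 1})
agg.process(1)            # processed, but the aggregator crashes before the next checkpoint
del agg                   # simulate the crash: in-memory state is gone

restarted = Aggregator(log, checkpoint=saved)
restarted.process(len(log))  # re-reads everything after offset 2 from the retained log
print(restarted.counts)      # {'shoes': 3, 'lamp': 1, 'book': 1}
```

Because the offset and the counts are saved together, the event processed just before the crash is neither lost nor counted twice after the restart.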

Even so, the aggregated data can still end up inconsistent, and we need to achieve high data integrity.

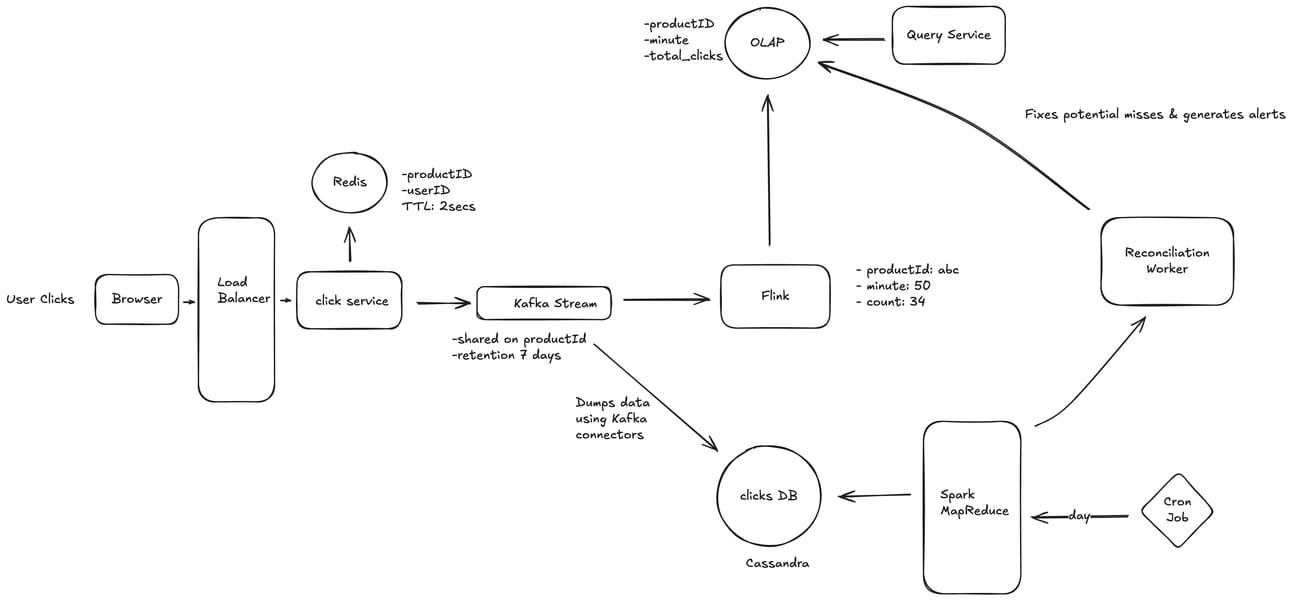

So we will introduce a periodic data reconciliation component to our design, reusing our previous batch processing model.

Here’s how:
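In code terms, reconciliation boils down to recomputing the counts from the raw events and correcting whatever the streaming path got wrong. This is a minimal sketch of that comparison, assuming the raw click events are still kept somewhere durable and treated as the source of truth. The function names and the dict standing in for the OLAP table are my own.

```python
from collections import Counter


def recount(raw_events):
    """Recompute counts per (product_id, minute) from the raw events."""
    return Counter((product_id, ts // 60) for product_id, ts in raw_events)


def reconcile(olap_counts, raw_events):
    """Fix olap_counts in place and return {key: (stored, expected)} for every mismatch."""
    expected = recount(raw_events)
    corrections = {}
    for key in set(expected) | set(olap_counts):
        stored, truth = olap_counts.get(key, 0), expected.get(key, 0)
        if stored != truth:
            corrections[key] = (stored, truth)
            if truth:
                olap_counts[key] = truth
            else:
                olap_counts.pop(key, None)  # the stream wrote a row that has no raw events
    return corrections


raw_events = [("shoes", 5), ("shoes", 20), ("lamp", 70)]
olap_counts = {("shoes", 0): 1, ("lamp", 1): 1}  # the stream missed one "shoes" click

print(reconcile(olap_counts, raw_events))  # {('shoes', 0): (1, 2)}
print(olap_counts[("shoes", 0)])           # 2
```

Running this periodically (say hourly or daily) keeps the fast streaming numbers honest without slowing down the real-time path.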

Now this design looks good enough to support all our functional and non-functional requirements.

Let’s see what more we can do here:

For better scalability, we introduce a load balancer in front of the click service.

Scale the click service horizontally.

Shard the Kafka stream based on productId.

One thing I should mention here: we may end up with a hot shard, since a single product may get clicked by a very large number of users. To address this, check out the well-known celebrity problem.

We can assign one Flink job to process the data from each Kafka shard.
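Here is one way the sharding and the hot-shard mitigation could look. It is a sketch under assumptions: the shard count, the list of hot products, and the `#` salt separator are all made up, and key salting is just one common answer to the celebrity problem, not necessarily the one you would pick. It also assumes product ids never contain `#`.

```python
import random
import zlib

NUM_SHARDS = 8                    # assumed shard count
SALT_BUCKETS = 4                  # how many ways a hot product is split
HOT_PRODUCTS = {"viral-product"}  # hypothetical; in practice detected from traffic


def shard_for(key):
    """Stable hash so the same key always lands on the same shard."""
    return zlib.crc32(key.encode("utf-8")) % NUM_SHARDS


def routing_key(product_id, rng=random):
    """Spread a hot product over several keys; leave normal products untouched."""
    if product_id in HOT_PRODUCTS:
        return f"{product_id}#{rng.randrange(SALT_BUCKETS)}"
    return product_id


def merge_salted(counts):
    """Sum the counts of salted keys back into their original product id."""
    merged = {}
    for key, n in counts.items():
        product_id = key.split("#", 1)[0]
        merged[product_id] = merged.get(product_id, 0) + n
    return merged


print(shard_for(routing_key("lamp")) == shard_for("lamp"))  # True: normal keys are stable
print(merge_salted({"viral-product#0": 3, "viral-product#2": 4, "lamp": 1}))
# {'viral-product': 7, 'lamp': 1}
```

The price of salting is the extra merge step: each shard only sees part of the hot product's clicks, so the partial counts have to be summed before ranking.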

Idempotency Issue:

What does being idempotent mean in the context of our problem?

Well, a user may be able to click a single product multiple times in quick succession.

To solve this, we can introduce a caching layer, say Redis, at the click service level. Before pushing a click event to the Kafka stream, we check whether that (productID, userID) pair is already in the cache.

If it is found, we ignore the click event; otherwise we add the pair to the cache and forward the event to the Kafka stream.

We also set a TTL (time to live) on the cached entry, let’s say 2 seconds, so that a click by the same user on the same product is processed again once those 2 seconds have passed.
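The check-then-forward logic can be sketched without a real Redis instance. Below, a small in-memory class stands in for Redis; `TtlSet`, `add_if_absent`, and `should_forward` are names invented for this example. With actual Redis, the same "set only if absent, with expiry" step can be done atomically with `SET key value NX EX 2`.

```python
import time

DEDUP_TTL_SECONDS = 2  # the 2-second window from the text


class TtlSet:
    """In-memory stand-in for Redis keys with an expiry (expired keys are not purged)."""

    def __init__(self, clock=time.monotonic):
        self._expires_at = {}
        self._clock = clock

    def add_if_absent(self, key, ttl_seconds):
        """Return True if the key was added, False if it is still present and unexpired."""
        now = self._clock()
        expiry = self._expires_at.get(key)
        if expiry is not None and expiry > now:
            return False
        self._expires_at[key] = now + ttl_seconds
        return True


def should_forward(cache, user_id, product_id):
    """True if this click should go to Kafka, False if it is a rapid repeat."""
    return cache.add_if_absent(f"click:{user_id}:{product_id}", DEDUP_TTL_SECONDS)


fake_now = [0.0]  # controllable clock so the example does not need to sleep
cache = TtlSet(clock=lambda: fake_now[0])

print(should_forward(cache, "u1", "shoes"))  # True: first click
print(should_forward(cache, "u1", "shoes"))  # False: repeat within 2 seconds
print(should_forward(cache, "u2", "shoes"))  # True: different user
fake_now[0] = 2.5
print(should_forward(cache, "u1", "shoes"))  # True: the TTL has expired
```

Doing the check and the insert as one atomic step matters once the click service is scaled horizontally, because two instances may receive the same user's rapid clicks at the same moment.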

Here’s the updated design now:

I think with this we have covered almost everything needed for a production-level click aggregation system.

No design is perfect, though, and this one may still have scope for improvement, but it is as close as we can get to solving the problem.

Thanks for reading till here. I hope you found this useful and learnt something new. 😀

Until next time.